Key Takeaways

- Define PING clearly before you evaluate enterprise AI platforms.

- Compare governance, integration, and security before choosing an approach.

- Use stable frameworks to reduce model, data, and compliance risk.

- Prioritise measurable workflows over generic AI experimentation.

- Map PING requirements to document-heavy business processes first.

PING can mean different things in different technical contexts, so buyers often struggle to turn the term into a practical enterprise AI decision. In enterprise settings, PING usually matters less as a standalone buzzword and more as a signal of how systems connect, respond, and operate under governance. This guide explains how to interpret PING in enterprise AI, where it fits in modern architecture, and how to assess platforms, controls, and workflows that deliver real business value.

If your team is researching PING because you want faster automation, better document intelligence, or safer AI deployment, the right question is not just “what is PING?” but “what capability does PING point to in our stack, and how do we operationalise it securely?”

What does PING mean in enterprise AI?

PING is not a standard enterprise AI category on its own. However, teams often use it informally when discussing responsiveness, connectivity checks, orchestration signals, or lightweight service-to-service communication. In practice, that means the term usually sits inside a wider conversation about AI platform reliability, agentic workflows, API health, and system observability.

For example, a data team may use PING to describe a simple availability check between an orchestration layer and a model endpoint. An operations team may use it more loosely to describe whether an AI service is reachable, responsive, and ready to process work. That is why enterprise buyers should anchor the discussion in architecture, not jargon.
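As a minimal sketch of that kind of availability check, assuming Python and only the standard library, the snippet below times a single probe and records whether it succeeded. The names `PingResult`, `ping`, and `http_probe` are illustrative rather than part of any specific platform, and any health URL passed to `http_probe` is an assumption about your own deployment.

```python
import time
import urllib.request
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PingResult:
    reachable: bool
    latency_ms: float
    error: Optional[str] = None


def ping(probe: Callable[[], bool]) -> PingResult:
    """Run one availability probe and record how long it took."""
    start = time.perf_counter()
    try:
        ok = bool(probe())
        error = None
    except Exception as exc:  # any failure is treated as "not reachable"
        ok, error = False, f"{type(exc).__name__}: {exc}"
    latency_ms = (time.perf_counter() - start) * 1000
    return PingResult(reachable=ok, latency_ms=latency_ms, error=error)


def http_probe(url: str, timeout_s: float = 2.0) -> Callable[[], bool]:
    """Build a probe that treats a 2xx response from `url` as healthy.

    The URL is hypothetical: use whatever health route your endpoint exposes.
    """
    def probe() -> bool:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            return 200 <= resp.status < 300
    return probe
```

An orchestration layer could call `ping(http_probe(url))` before dispatching work and hold or reroute the task when `reachable` is false.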

PING in enterprise AI architecture

In most organisations, PING-related discussions show up in five places. Each one affects performance, governance, and user trust.

- Endpoint health: checks whether a model, vector service, or workflow endpoint is reachable.

- Latency monitoring: measures response time across AI pipelines and integrations.

- Workflow orchestration: signals when one service should trigger the next action.

- Agent supervision: confirms whether an agentic task completed, failed, or needs escalation.

- Operational resilience: supports alerting, retries, and failover in production systems.
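To make the retry and failover item concrete, here is a hedged sketch of one common pattern: try each endpoint in order, retrying with exponential backoff before moving to the next. The function name `call_with_failover` and its parameters are assumptions for illustration, and production platforms usually add jitter, timeouts, and alerting on top.

```python
import time
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


def call_with_failover(
    endpoints: Sequence[Callable[[], T]],
    max_attempts: int = 3,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Try each endpoint in order, retrying each with exponential backoff."""
    last_error: Optional[Exception] = None
    for call in endpoints:
        for attempt in range(max_attempts):
            try:
                return call()
            except Exception as exc:
                last_error = exc
                # Wait 0.5s, 1s, 2s, ... but not after the final attempt.
                if attempt < max_attempts - 1:
                    sleep(base_delay_s * 2 ** attempt)
    raise RuntimeError("all endpoints failed") from last_error
```

Passing the primary model endpoint first and a backup second gives simple failover. The `sleep` parameter is injectable so the behaviour can be tested without real delays.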

These functions matter most when AI moves beyond chat demos into regulated, document-heavy operations. That includes claims handling, supplier onboarding, contract review, KYC, invoice processing, and policy administration.

Why PING matters for enterprise AI buyers

Enterprise teams rarely buy “PING” as a product. Instead, they buy platforms and controls that make AI systems observable, governable, and dependable. As a result, the term becomes useful only when it helps define evaluation criteria.

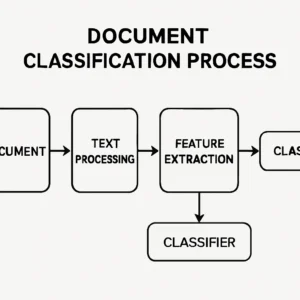

For example, if your AI workflow depends on document ingestion, classification, extraction, retrieval, and human approval, every handoff needs clear status signals. Without that, teams cannot diagnose failures quickly. In addition, they cannot prove service quality to risk, compliance, or business stakeholders.
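One way to turn raw status signals into evidence of service quality is to roll them up into availability and tail latency. The sketch below assumes each health sample is a hypothetical `(succeeded, latency_ms)` pair and uses a nearest-rank 95th percentile. The function name and the choice of p95 are illustrative, not a standard your platform necessarily follows.

```python
from typing import Dict, List, Optional, Tuple


def summarise(samples: List[Tuple[bool, float]]) -> Dict[str, Optional[float]]:
    """Summarise health samples as availability and p95 latency.

    Each sample is (succeeded, latency_ms). Latency is computed over
    successful checks only, using the nearest-rank method.
    """
    if not samples:
        raise ValueError("no samples to summarise")
    ok_latencies = sorted(lat for ok, lat in samples if ok)
    availability = len(ok_latencies) / len(samples)
    p95: Optional[float] = None
    if ok_latencies:
        # ceil(0.95 * n) using integer arithmetic to avoid float rounding
        rank = (95 * len(ok_latencies) + 99) // 100
        p95 = ok_latencies[rank - 1]
    return {"availability": availability, "p95_latency_ms": p95}
```

Reporting these two numbers per workflow step gives risk and business stakeholders a consistent view of where handoffs degrade.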

Key evaluation questions

- Can the platform monitor model endpoints and workflow steps in real time?

- Does it support retries, escalation paths, and human-in-the-loop controls?

- Can it log prompts, outputs, and decisions for audit purposes?

- Does it integrate with identity, SIEM, and enterprise data platforms?

- Can it enforce policy across multiple model providers?

- Does it support document-centric workflows, not just chat interfaces?
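When probing the audit-logging question with vendors, it can help to have a concrete picture of what a log entry might contain. The following is a sketch only, assuming an in-memory list and a simple SHA-256 hash chain for tamper evidence. The field names and both function names are hypothetical, and a real platform would persist entries to controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, str]) -> str:
    """Hash an entry body deterministically (sorted keys)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit_entry(log: List[Dict[str, str]], *, actor: str, prompt: str,
                       output: str, decision: str) -> Dict[str, str]:
    """Append one prompt/output/decision record, chained to the previous entry."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "prompt": prompt,
        "output": output,
        "decision": decision,
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "entry_hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: List[Dict[str, str]]) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev or entry["entry_hash"] != _digest(body):
            return False
        prev = entry["entry_hash"]
    return True
```

The chain means that editing an earlier record invalidates every later hash, which is one simple way to make an audit trail tamper-evident.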

PING and AI governance: the non-negotiables

Responsiveness without governance creates risk. If a system can answer quickly but cannot explain, log, or constrain its actions, it is not enterprise-ready. That is why PING-related architecture should sit inside a formal governance model.

Start with the NIST AI Risk Management Framework 1.0, which organises risk work into four functions: Govern, Map, Measure, and Manage. Then align controls to ISO/IEC 42001:2023 for AI management systems and to ISO/IEC 27001 for information security management.

If your use case affects individuals, review the high-risk classification rules in Article 6 of the EU AI Act and the limits on solely automated decision-making in GDPR Article 22. For application security, use the OWASP Top 10 for LLM Applications and map adversarial behaviours to MITRE ATLAS.

PING vs broader enterprise AI capabilities

| Capability area | What it does | Why it matters more than “PING” alone |
|---|---|---|
| Observability | Tracks latency, failures, throughput, and endpoint health | Gives measurable operational insight |
| Agent orchestration | Coordinates tasks, tools, approvals, and retries | Turns signals into business outcomes |
| Document intelligence | Classifies, extracts, validates, and routes content | Connects AI to high-volume enterprise work |
| Governance | Applies policy, audit trails, and access controls | Reduces legal and operational risk |
| Model management | Supports multiple providers and deployment patterns | Avoids lock-in and improves resilience |
| Knowledge retrieval | Grounds outputs in approved enterprise content | Improves accuracy and trust |

In short, PING is best treated as one small operational signal inside a much larger enterprise AI system. Buyers should optimise for business process performance, not for terminology.

How to evaluate a PING-related enterprise AI stack

- Define the business workflow first, including inputs, outputs, approvals, and service levels.

- Identify where PING-like signals matter, such as endpoint health, retries, and escalation triggers.

- Choose the deployment pattern, including cloud, hybrid, or private infrastructure.

- Assess model strategy across frontier reasoning models, open-weight models, and small task-specific models.

- Validate governance against NIST AI RMF, ISO/IEC 42001, GDPR, and the EU AI Act.

- Test observability, logging, and incident response before production rollout.

- Measure business KPIs such as cycle time, exception rate, and human review load.
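The KPIs in the final step are simple to compute once each case carries a few timestamps and flags. The sketch below assumes a hypothetical `Case` record with those fields. The field names and the choice of hours as the unit are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List


@dataclass
class Case:
    received: datetime
    completed: datetime
    raised_exception: bool     # the workflow hit an exception path
    needed_human_review: bool  # a person had to review the case


def workflow_kpis(cases: List[Case]) -> Dict[str, float]:
    """Compute average cycle time, exception rate, and human review load."""
    if not cases:
        raise ValueError("no cases to measure")
    n = len(cases)
    total_cycle = sum((c.completed - c.received for c in cases), timedelta())
    return {
        "avg_cycle_time_hours": total_cycle.total_seconds() / 3600 / n,
        "exception_rate": sum(c.raised_exception for c in cases) / n,
        "human_review_rate": sum(c.needed_human_review for c in cases) / n,
    }
```

Tracking these values before and after rollout gives a baseline for judging whether the workflow improved, rather than relying on model-level metrics alone.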

For example, enterprises commonly build on platforms such as Microsoft Azure AI Foundry, Amazon Bedrock, Google Vertex AI, Databricks Data Intelligence Platform, and Snowflake AI Data Cloud. In addition, teams often use NVIDIA NIM and Hugging Face to support model serving, evaluation, and open ecosystem workflows.

Common mistakes when teams search for PING

Treating PING as a product category

Many teams assume PING refers to a discrete AI tool they should buy. However, it usually points to a technical function inside a wider platform. That misunderstanding leads to poor vendor shortlists and vague requirements.

Ignoring document-heavy use cases

Some teams focus on chatbot demos first. In contrast, the strongest returns often come from document-intensive workflows with clear rules, high volume, and measurable delays. That is where observability, orchestration, and governance create immediate value.

Overlooking compliance and auditability

Fast responses do not equal safe automation. Enterprises need logging, policy controls, human review, and traceability from day one. This matters even more when outputs influence customer decisions, financial actions, or regulated records.

Where PING fits best in real-world operations

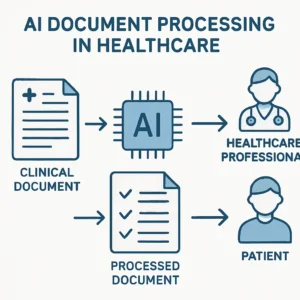

PING-related capabilities matter most when AI must coordinate multiple systems reliably. For example, an intake workflow may receive a document, classify it, extract fields, validate exceptions, query a secure knowledge base, and route the case for approval. Every stage needs status visibility.
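The intake workflow described above can be sketched as a small runner that records a status for every stage, so a failure is never silent. This is a simplified illustration: `Status`, `StageReport`, `run_workflow`, and `needs_escalation` are hypothetical names, and each stage is assumed to be a function that takes and returns a case dictionary.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict, List, Sequence, Tuple

Stage = Tuple[str, Callable[[Dict], Dict]]  # (stage name, handler)


class Status(Enum):
    COMPLETED = "completed"
    FAILED = "failed"
    SKIPPED = "skipped"


@dataclass
class StageReport:
    stage: str
    status: Status
    detail: str = ""


def run_workflow(stages: Sequence[Stage], case: Dict) -> List[StageReport]:
    """Run stages in order and report a status for every one of them."""
    reports: List[StageReport] = []
    failed = False
    for name, handler in stages:
        if failed:
            # Downstream stages are marked explicitly rather than dropped.
            reports.append(StageReport(name, Status.SKIPPED, "upstream failure"))
            continue
        try:
            case = handler(case)
            reports.append(StageReport(name, Status.COMPLETED))
        except Exception as exc:
            failed = True
            reports.append(StageReport(name, Status.FAILED, str(exc)))
    return reports


def needs_escalation(reports: Sequence[StageReport]) -> bool:
    """Flag the case for human attention if any stage failed."""
    return any(r.status is Status.FAILED for r in reports)
```

Because skipped stages are reported rather than omitted, an operator can see exactly where a case stopped and which steps still need to run after the issue is resolved.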

The best enterprise AI designs therefore combine model flexibility with strong workflow controls. They use leading multimodal models where needed, smaller specialised models where efficient, and retrieval systems grounded in approved content. They also keep humans in the loop for exceptions and high-impact decisions.

Making It Operational

Contellect helps enterprises turn AI concepts into governed document workflows. Its platform combines IDP, AI-powered data extraction, automated document classification, secure RAG knowledge bases, and agentic AI workflows in a model-agnostic architecture.

In addition, teams can connect document management, metadata intelligence, e-signature, and enterprise integrations without stitching together disconnected tools. To see how that works in practice, explore the platform or request a demo.

Frequently Asked Questions

What is PING in enterprise AI?

PING in enterprise AI usually refers to a signal about connectivity, responsiveness, or service health rather than a standalone product category. In practice, teams use it when discussing endpoint checks, workflow status, and orchestration events across AI systems. The useful question is how that signal supports reliable business processes.

How does PING relate to AI observability?

PING is one small part of AI observability. It can show whether a service is reachable or responsive, but observability goes further by tracking latency, failures, throughput, logs, and decision traces. For enterprise AI, you need both simple health signals and deeper operational monitoring.

Why does PING matter in document automation?

PING matters in document automation because workflows depend on many handoffs between services. A document may need classification, extraction, validation, retrieval, and approval. If one step fails silently, the whole process slows down. Clear status signals help teams detect issues quickly and maintain service quality.

When should a business care about PING in AI systems?

A business should care about PING when AI moves into production and supports real operations. That includes customer onboarding, claims, finance, procurement, and compliance workflows. Once service levels, auditability, and exception handling matter, PING-like signals become important for reliability and governance.

Is PING a feature to ask vendors about?

Yes, but only in context. Ask vendors how they handle endpoint health, retries, orchestration status, logging, and alerts rather than asking about PING in isolation. That approach gives you a clearer view of whether the platform can support enterprise AI workloads safely and at scale.